Next, create the EKS cluster with the cluster name attractive-gopher: `eksctl create cluster --name=attractive-gopher`

Now create an IAM OIDC provider and associate it with your cluster: `eksctl utils associate-iam-oidc-provider --cluster=attractive-gopher --approve`

Learn more about IAM Roles for Service Accounts in the Amazon EKS documentation.

Next, let’s deploy the AWS ALB Ingress controller into our EKS cluster using the steps below.

First off, deploy the relevant RBAC roles and role bindings as required by the AWS ALB Ingress controller: `kubectl apply -f`

Next, create an IAM policy named ALBIngressControllerIAMPolicy to allow the ALB Ingress controller to make AWS API calls on your behalf. Record the Policy.Arn in the command output; you will need it in the next step: `aws iam create-policy \`
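As a rough sketch of the two eksctl steps above, the script below wraps them in a small helper so they can be reviewed before anything is created. The `run` and `bootstrap_cluster` functions and the `DRY_RUN` variable are hypothetical names introduced for illustration; only the `eksctl` commands themselves come from the post. By default nothing is executed and the commands are only printed.

```shell
# Sketch only: prints the cluster bootstrap commands unless DRY_RUN=0 is set.
set -eu

CLUSTER_NAME="${CLUSTER_NAME:-attractive-gopher}"  # cluster name used in this post

# Hypothetical helper: echo the command in dry-run mode, execute it otherwise.
run() {
  if [ "${DRY_RUN:-1}" = "1" ]; then
    echo "+ $*"
  else
    "$@"
  fi
}

# Hypothetical wrapper around the two eksctl steps described in the text.
bootstrap_cluster() {
  # Create the EKS cluster.
  run eksctl create cluster --name="$CLUSTER_NAME"
  # Create an IAM OIDC provider and associate it with the cluster.
  run eksctl utils associate-iam-oidc-provider --cluster="$CLUSTER_NAME" --approve
}

bootstrap_cluster
```

Setting `DRY_RUN=0` would run the commands for real, which assumes `eksctl` is installed and AWS credentials are configured.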

What is Kubernetes Ingress?

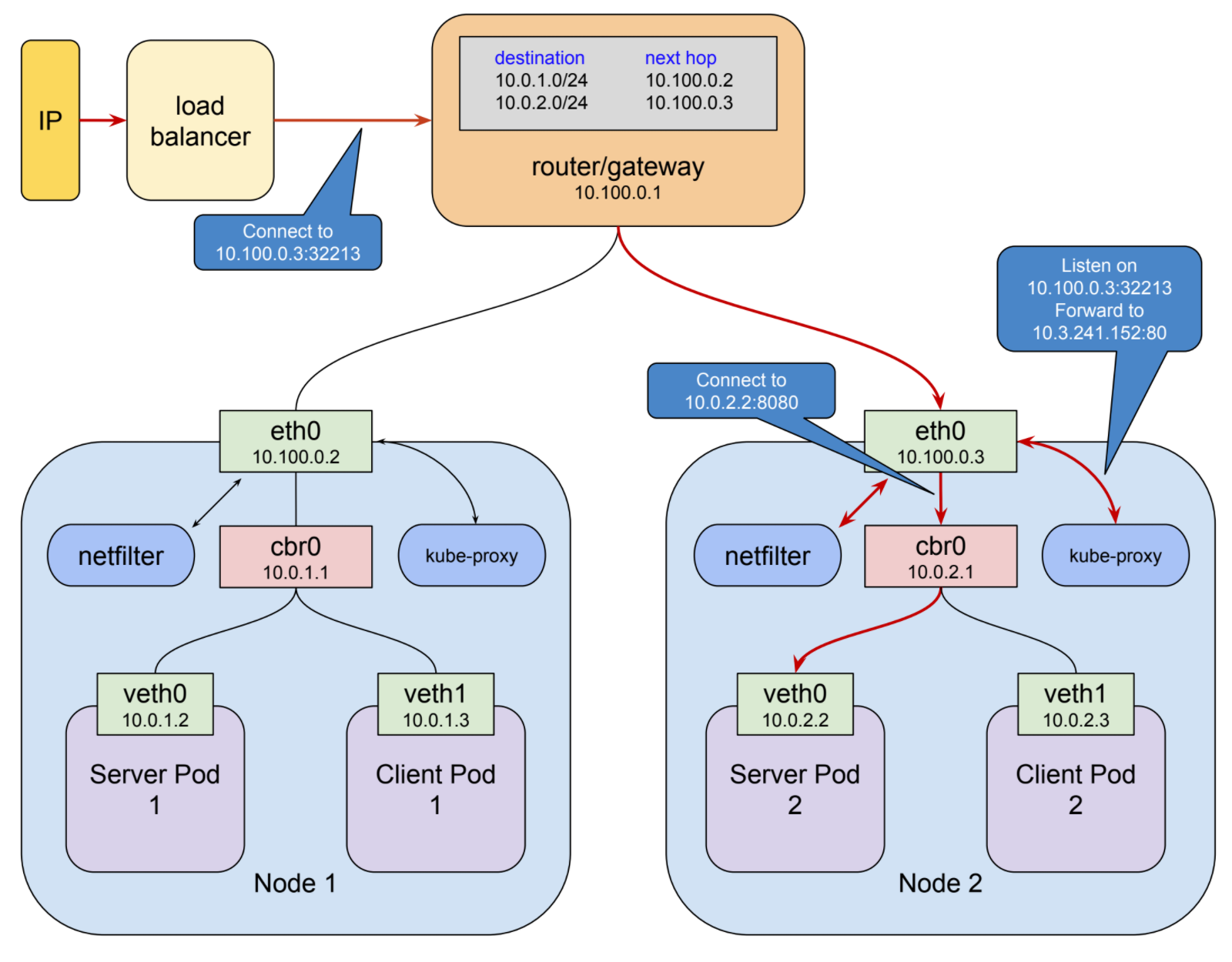

Creating a rule for each path ensures that traffic to a specific path is routed to the correct TargetGroup.

The AWS ALB Ingress controller supports two traffic modes: instance mode and ip mode. Users can explicitly specify these traffic modes by declaring the /target-type annotation on the Ingress and the service definitions.

- instance mode: Ingress traffic starts from the ALB and reaches the NodePort opened for your service. Traffic is then routed to the pods within the cluster.
- ip mode: Ingress traffic starts from the ALB and reaches the pods within the cluster directly. To use this mode, the networking plugin for the Kubernetes cluster must use a secondary IP address on ENI as pod IP, also known as the AWS CNI plugin for Kubernetes.

Note: If you are using the ALB ingress with EKS on Fargate, you want to use ip mode.

Deploy Amazon EKS with eksctl

First, let’s deploy an Amazon EKS cluster with eksctl. To install eksctl you can use, for example, Homebrew on macOS: `brew install weaveworks/tap/eksctl`
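To make the traffic modes described above concrete, here is a minimal sketch that writes an Ingress manifest with the target-type annotation set. Several things are assumptions rather than part of the post: the names `demo-ingress` and `demo-service`, the output file name, and the `TARGET_TYPE` variable are hypothetical, and the full annotation key `alb.ingress.kubernetes.io/target-type` is taken from the controller project's documentation (the text here shows only its `/target-type` suffix). The `apiVersion` shown is that of current Kubernetes releases; clusters from the period of this post served an older beta Ingress API, so adjust it to what your cluster supports.

```shell
# Sketch only: writes a hypothetical Ingress manifest; it does not contact a cluster.
set -eu

MANIFEST="${MANIFEST:-ingress-sketch.yaml}"  # hypothetical output file
TARGET_TYPE="${TARGET_TYPE:-ip}"             # "ip" or "instance"

cat > "$MANIFEST" <<EOF
apiVersion: networking.k8s.io/v1
kind: Ingress
metadata:
  name: demo-ingress                # hypothetical name
  annotations:
    kubernetes.io/ingress.class: alb
    alb.ingress.kubernetes.io/scheme: internet-facing
    alb.ingress.kubernetes.io/target-type: ${TARGET_TYPE}
spec:
  rules:
    - http:
        paths:
          - path: /
            pathType: Prefix
            backend:
              service:
                name: demo-service  # hypothetical backend Service
                port:
                  number: 80
EOF

echo "wrote $MANIFEST with target-type=$TARGET_TYPE"
```

Running the script with `TARGET_TYPE=instance` produces the same manifest for instance mode. Once reviewed, the file could be applied with `kubectl apply -f ingress-sketch.yaml`.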

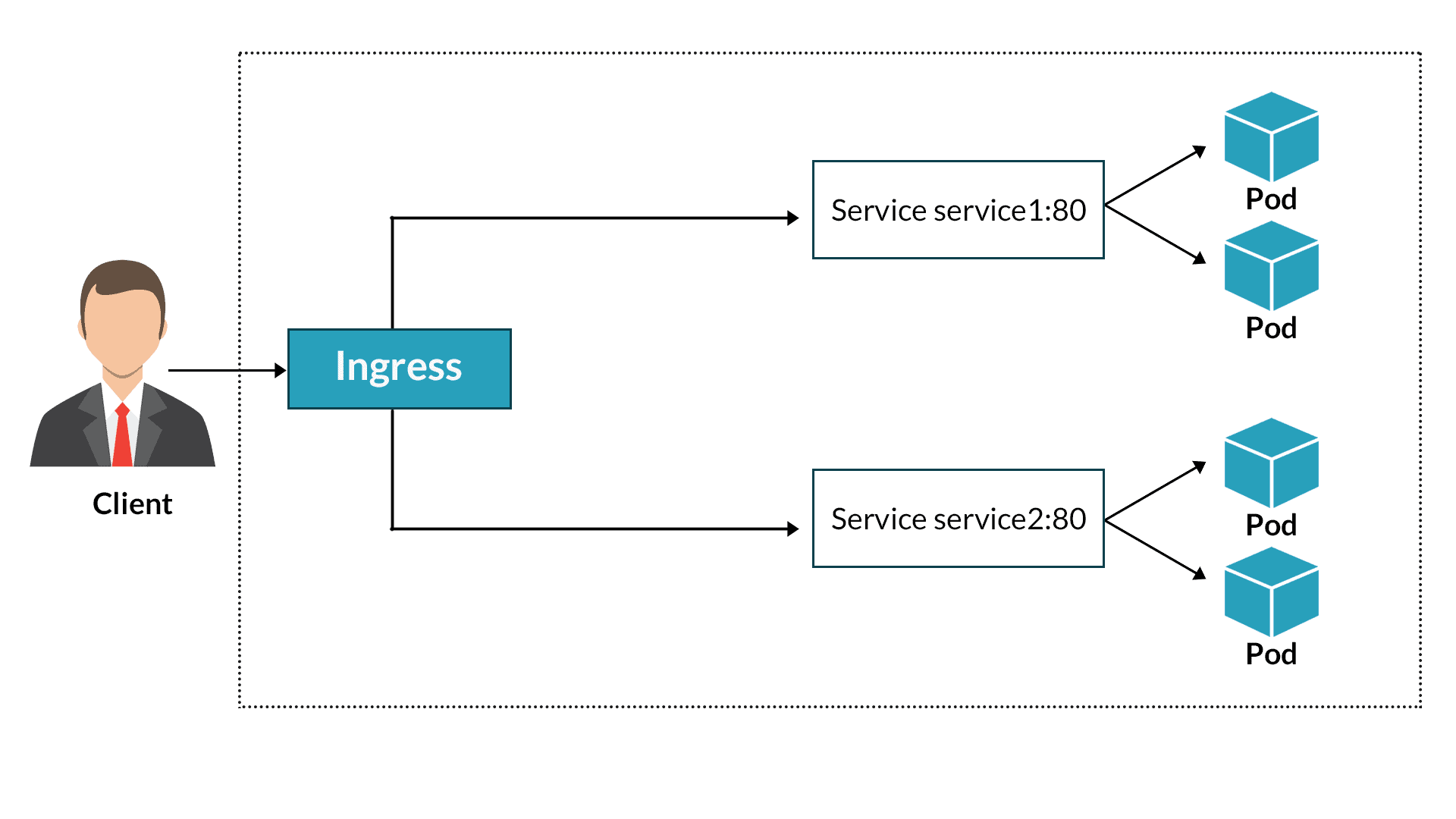

Amazon Elastic Load Balancing Application Load Balancer (ALB) is a popular AWS service that load balances incoming traffic at the application layer (layer 7) across multiple targets, such as Amazon EC2 instances, in a region. ALB supports multiple features including host or path based routing, TLS (Transport Layer Security) termination, WebSockets, HTTP/2, AWS WAF (Web Application Firewall) integration, integrated access logs, and health checks.

The open source AWS ALB Ingress controller triggers the creation of an ALB and the necessary supporting AWS resources whenever a Kubernetes user declares an Ingress resource in the cluster. The Ingress resource uses the ALB to route HTTP(S) traffic to different endpoints within the cluster. The AWS ALB Ingress controller works on any Kubernetes cluster including Amazon Elastic Kubernetes Service (Amazon EKS).

We will use the following acronyms to describe the Kubernetes Ingress concepts in more detail:

- NodePort: When a user sets the Service type field to NodePort, Kubernetes allocates a static port from a range and each worker node will proxy that same port to said Service.

How Kubernetes Ingress works with aws-alb-ingress-controller

The following diagram details the AWS components that the aws-alb-ingress-controller creates whenever an Ingress resource is defined by the user. The Ingress resource routes ingress traffic from the ALB to the Kubernetes cluster.

Following the steps in the numbered blue circles in the above diagram:

1. The controller watches for Ingress events from the API server. When it finds Ingress resources that satisfy its requirements, it starts the creation of AWS resources.
2. An ALB is created for the Ingress resource.
3. TargetGroups are created for each backend specified in the Ingress resource.
4. Listeners are created for every port specified as Ingress resource annotation. If no port is specified, sensible defaults (80 or 443) are used.
5. Rules are created for each path specified in your Ingress resource.
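The NodePort definition above can be illustrated with a small Service manifest. This is a sketch under stated assumptions: the Service name `demo-service`, the `app: demo` selector, the container port 8080, and the output file name are all hypothetical. No `nodePort` value is set, so Kubernetes would allocate one from its configured range (30000-32767 by default).

```shell
# Sketch only: writes a hypothetical NodePort Service manifest; nothing is applied.
set -eu

SERVICE_MANIFEST="${SERVICE_MANIFEST:-service-sketch.yaml}"  # hypothetical output file

cat > "$SERVICE_MANIFEST" <<'EOF'
apiVersion: v1
kind: Service
metadata:
  name: demo-service      # hypothetical name
spec:
  type: NodePort          # each worker node proxies the allocated port to this Service
  selector:
    app: demo             # hypothetical pod label
  ports:
    - port: 80            # port exposed by the Service inside the cluster
      targetPort: 8080    # hypothetical container port
      protocol: TCP
EOF

echo "wrote $SERVICE_MANIFEST"
```

A Service of this type is what gives the ALB a node-level port to target; the file could be applied with `kubectl apply -f service-sketch.yaml` after review.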

Note: This post was updated in January 2020 to reflect new best practices in container security since we launched native least-privileges support at the pod level, and the instructions have been updated for the latest controller version. You can also learn about Using ALB Ingress Controller with Amazon EKS on Fargate.

Kubernetes Ingress is an API resource that allows you to manage external or internal HTTP(S) access to Kubernetes services running in a cluster.